By: Gary Audin

Meeting face to face is usually much better than a phone call or audio conference. Video conferencing provides a face-to-face conversation. You learn more with video than with audio alone, and that benefit over audio conferencing matters especially during COVID-19.

What You See is Not Sound

When you look at a person or group of people, you register their behavior both consciously and unconsciously. You and the other parties are conveying more than what is spoken. Robert Masters, in his article “Compassionate Wrath: Transpersonal Approaches to Anger,” in the Journal of Transpersonal Psychology, vol. 32, no. 1, pp. 31–51, 2000, presented his view of affect, feeling, and emotion.

“Affect is an innately structured, non-cognitive evaluative sensation that may or may not register in consciousness; feeling is affect made conscious, possessing an evaluative capacity that is not only physiologically based, but that is often also psychologically (and sometimes relationally) oriented; and emotion is psychosocially constructed, dramatized feeling.”

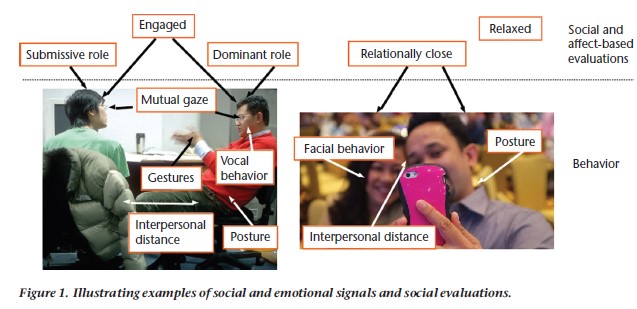

Inspect the picture to see which parts of a video conversation may not be available in an audio conference. The two pictures in this post are from “Emotional and Social Signals: A Neglected Frontier in Multimedia Computing.”

- Roles – A participant can be dominant, submissive, or both.

- Gesture – Emotions and statements can be conveyed through hand, head, and body gestures, whether to emphasize a point or to intimidate the other participant.

- Eye contact and position – If eye contact is not present, the person may be avoiding responsibility for a statement, may be distracted from the conversation, or may be expressing emotion, such as by rolling their eyes.

- Posture – Sitting up straight can convey confidence and show that the person is in control of themselves, while a slouching posture can signal disinterest or disagreement.

- Distance to the camera – Just as some people who lack confidence keep their distance in person, staying away from the camera may indicate a lack of confidence or a desire not to be part of the video conversation.

- Emotional state – The person could be relaxed, tense, scared, uncomfortable, intimidated, aggressive, assertive, or virtually anything in between.

- Facial expressions – A blank face, a poker face, is hard to read. Most of us do not have that type of control all the time, and maybe never. The facial expression and the changes that occur during the conversation all provide insight into the other person’s state of mind.

Emotional and Social Signals

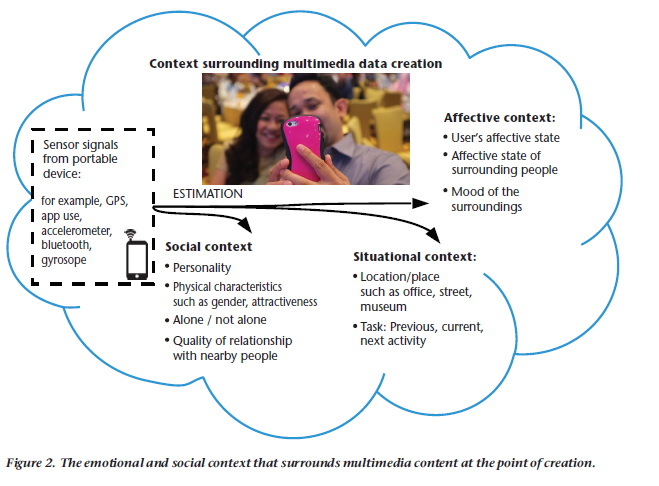

The context surrounding the conversation, both visible (to the person) and invisible (provided by technology), can further augment the social signals. Three contexts surround the video conversation.

- Social context – This covers the personalities of the parties to the conversation, their gender, possibly their nationality, their attractiveness, and whether they are alone or with others who may or may not participate in the conversation. Do they know each other, and how well? What is their previous emotional relationship (do they like and respect each other)? Even the way they are dressed can affect the conversation. (I am very casual about what I wear below the belt when sitting in a video conference.)

- Situational context – Where the parties are located (classroom, office, indoors or outdoors, hotel room, lobby, conference/huddle room, convention center) will influence what is said, the security of the conversation, and the participants’ attitude. Does the conversation cover a past discussion, a new one, or a future activity?

- Affective context – This is the state of the user: busy, distracted, tired, agitated, awake, over-caffeinated, or just not interested. The same can be said of those surrounding the speaker. Are the surroundings loud or quiet, bright or dimly lit, full of physical motion or just calm?

What Audio Can’t Do, Video Can

Some people can pick up many, but not all, of the social cues I have attributed to video conversations. Most can’t. Video clearly adds to the comprehension of a conversation. The “Emotional and Social Signals” paper mentioned at the beginning of this blog explores the idea that computer recognition of these cues is on the horizon. This may help some people, but I think the human interpretation of social signals will still be superior.